As a developer, you may be familiar with TCP and UDP, but do you really understand what they are, how they differ, and how they affect software system architecture?

Overview of computer networks

Before introducing TCP and UDP, we must first describe how computers communicate over the internet.

When you use your computer or smartphone to browse the web or open an app such as Snapchat, your device sends a request to a server. The server then returns the requested resource to you.

But how can two computers interact when they are hundreds or even thousands of kilometers apart?

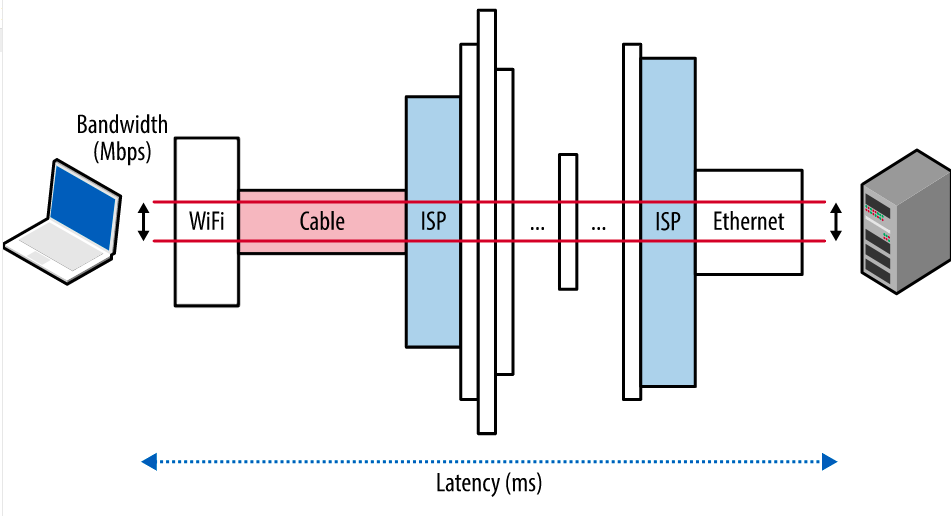

The data is sent over the internet: first from your device to the router over Wi-Fi or an RJ45 (Ethernet) cable, and then onward over fiber-optic or ADSL lines (ADSL is now much less common).

The time it takes for data to travel across the internet from one computer to another is called network latency, as illustrated in the figure below:

A computer network is made up of a wide variety of parts, including hardware, software, chips, and circuits. Writing communication software that deals with all of this infrastructure directly would be very difficult.

Computer network models were created to make this simpler. The two best-known models are the OSI model and the TCP/IP model: the OSI model has 7 layers, while the TCP/IP model is described with either 4 or 5 layers.

TCP and UDP are the two main protocols of the Transport layer in both of these models.

TCP – Transmission Control Protocol

The Transmission Control Protocol (TCP) comes first.

With TCP, you can be sure that the bytes received are an exact copy of the bytes sent and that they arrive in the same order. TCP also handles retransmission of lost data, congestion control, and more.

To make this possible, TCP first establishes a connection using a procedure called the three-way handshake (or 3-way handshake).

Three-way handshake

The purpose of this handshake is for the client and server to exchange initial sequence numbers, so that both ends of the connection can keep track of the data they send and receive. For security reasons, these initial sequence numbers are chosen randomly.

As the term implies, there are 3 steps to a three-way handshake:

- SYN: The client picks a random number, x, and sends a SYN (synchronize sequence numbers) packet to the server. This packet can also carry additional flags and options for the TCP connection.

- SYN-ACK: After receiving the SYN, the server acknowledges it with x + 1, picks its own random number, y, adds its own TCP options, and sends the response back to the client.

- ACK: The client increments x and y by 1 and sends an ACK (acknowledgment) packet to the server to complete the handshake.
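The sequence-number bookkeeping in these three steps can be sketched in Python. This only simulates the numbers, not real packets; `Segment` and `three_way_handshake` are hypothetical names, and the wraparound at 2^32 reflects the 32-bit size of TCP's sequence number field.

```python
import random
from dataclasses import dataclass
from typing import List, Optional

SEQ_SPACE = 2 ** 32  # TCP sequence numbers are 32-bit and wrap around


@dataclass
class Segment:
    flags: str          # "SYN", "SYN-ACK" or "ACK"
    seq: int            # sender's sequence number
    ack: Optional[int]  # acknowledgment number (None when the ACK flag is unset)


def three_way_handshake(rng: random.Random) -> List[Segment]:
    """Simulate the numbers exchanged in a TCP three-way handshake."""
    x = rng.getrandbits(32)  # client's random initial sequence number
    y = rng.getrandbits(32)  # server's random initial sequence number

    # 1. SYN: the client announces x.
    syn = Segment("SYN", seq=x, ack=None)
    # 2. SYN-ACK: the server acknowledges x with x + 1 and announces y.
    syn_ack = Segment("SYN-ACK", seq=y, ack=(x + 1) % SEQ_SPACE)
    # 3. ACK: the client moves on to x + 1 and acknowledges y with y + 1.
    ack = Segment("ACK", seq=(x + 1) % SEQ_SPACE, ack=(y + 1) % SEQ_SPACE)
    return [syn, syn_ack, ack]


for segment in three_way_handshake(random.Random(42)):
    print(segment)
```

Seeding the generator only makes the demo repeatable; a real TCP stack picks its initial sequence numbers with its own randomization scheme.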

The time between the client sending a request and receiving the first packet of the response is called the round-trip time (RTT). It does not include the time it takes to receive the full set of data.

In the example above, the first round trip took 28 ms to complete, whereas the second needed 84 ms. The number of subsequent round trips grows with the amount of data being transferred.
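To make the link between data size and round trips concrete, here is a deliberately rough Python model. It assumes (hypothetically) that the handshake costs one RTT and that a fixed number of segments is delivered per RTT, and it ignores slow start, losses, and server processing time; the function and parameter names are illustrative only.

```python
import math


def estimate_transfer_time_ms(rtt_ms: float, total_segments: int,
                              segments_per_rtt: int) -> float:
    """Very rough transfer-time estimate under a simplified, hypothetical model.

    One RTT for the handshake, then a fixed number of segments per RTT.
    Slow start, losses and server processing time are ignored.
    """
    data_rtts = math.ceil(total_segments / segments_per_rtt)
    return (1 + data_rtts) * rtt_ms


# Four times the data means four times as many data round trips.
print(estimate_transfer_time_ms(50.0, total_segments=10, segments_per_rtt=10))  # 100.0
print(estimate_transfer_time_ms(50.0, total_segments=40, segments_per_rtt=10))  # 250.0
```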

Once the connection is established, the two sides exchange data and acknowledgments message by message, much like in the three-way handshake, to confirm that the data has arrived. The more data there is, the more RTTs are needed.

For instance, when I captured TCP messages with the free tool Wireshark, the results were as follows:

Congestion control

Congestion can occur when data flows through the network unchecked. It is like a road: if an intersection has no lanes, signs, or traffic lights, traffic jams are almost certain.

TCP therefore manages congestion on each connection it establishes with the three-way handshake. The core of this mechanism is the congestion window, or CWND (for now, think of it as the data transmission window), and TCP's congestion policy has 3 phases.

Because CWND determines how much data can be sent in one RTT, the three phases of congestion control mainly revolve around adjusting its value:

Slow Start Phase: exponential increase – In this phase, the congestion window doubles after every RTT.

- Initially, cwnd = 1
- After 1 RTT, cwnd = 2^1 = 2
- After 2 RTTs, cwnd = 2^2 = 4
- After 3 RTTs, cwnd = 2^3 = 8

Congestion Avoidance Phase: additive increase – This phase starts once cwnd reaches the threshold value, denoted ssthresh. From then on, cwnd (the congestion window) grows additively: after each RTT, cwnd = cwnd + 1.

- Initially, cwnd = i
- After 1 RTT, cwnd = i + 1
- After 2 RTTs, cwnd = i + 2
- After 3 RTTs, cwnd = i + 3
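The two growth phases can be traced with a short Python sketch. It counts cwnd in whole segments and updates it once per RTT, matching the simplified description above; real implementations (RFC 5681) update the window on each ACK and measure it in bytes. Capping slow start exactly at ssthresh is a simplification of this sketch, and `cwnd_trace` is a hypothetical helper.

```python
from typing import List


def cwnd_trace(ssthresh: int, rtts: int, initial_cwnd: int = 1) -> List[int]:
    """Return cwnd (in segments) initially and after each RTT, assuming no losses."""
    cwnd = initial_cwnd
    trace = [cwnd]
    for _ in range(rtts):
        if cwnd < ssthresh:
            cwnd = min(cwnd * 2, ssthresh)  # slow start: double, capped at ssthresh
        else:
            cwnd += 1                       # congestion avoidance: +1 per RTT
        trace.append(cwnd)
    return trace


print(cwnd_trace(ssthresh=8, rtts=6))  # [1, 2, 4, 8, 9, 10, 11]
```

The output shows the switch from doubling to linear growth once cwnd reaches ssthresh = 8.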

Congestion Detection Phase: multiplicative decrease – If congestion occurs, the congestion window is reduced. The only way a sender can infer that congestion has occurred is that it needs to retransmit a segment. Retransmission recovers a missing packet that is assumed to have been dropped by a router because of congestion. It is triggered in one of two cases: when the RTO (retransmission timeout) timer expires, or when three duplicate ACKs are received.

- Case 1: Retransmission due to a timeout – In this case, congestion is highly likely.

  (a) ssthresh is reduced to half of the current window size.

  (b) cwnd is set to 1.

  (c) The slow start phase begins again.

- Case 2: Retransmission due to three duplicate ACKs – In this case, congestion is less likely.

  (a) ssthresh is reduced to half of the current window size.

  (b) cwnd is set to ssthresh.

  (c) The congestion avoidance phase begins.
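The two cases can be expressed as small state transitions. This Python sketch encodes the simplified rules listed above, not a full implementation of TCP Reno or any particular stack; `CongestionState`, `on_timeout`, and `on_three_duplicate_acks` are hypothetical names, and the floor of 2 segments on ssthresh is an assumption borrowed from RFC 5681.

```python
from dataclasses import dataclass


@dataclass
class CongestionState:
    cwnd: int      # congestion window, in segments
    ssthresh: int  # slow start threshold, in segments
    phase: str     # "slow_start" or "congestion_avoidance"


def on_timeout(state: CongestionState) -> CongestionState:
    """Case 1: the RTO timer expired, so congestion is likely severe."""
    ssthresh = max(state.cwnd // 2, 2)  # halve the window, keep a small floor
    return CongestionState(cwnd=1, ssthresh=ssthresh, phase="slow_start")


def on_three_duplicate_acks(state: CongestionState) -> CongestionState:
    """Case 2: three duplicate ACKs arrived, so congestion is milder."""
    ssthresh = max(state.cwnd // 2, 2)
    return CongestionState(cwnd=ssthresh, ssthresh=ssthresh,
                           phase="congestion_avoidance")


state = CongestionState(cwnd=16, ssthresh=8, phase="congestion_avoidance")
print(on_timeout(state))
# CongestionState(cwnd=1, ssthresh=8, phase='slow_start')
print(on_three_duplicate_acks(state))
# CongestionState(cwnd=8, ssthresh=8, phase='congestion_avoidance')
```

The difference between the two cases is only where cwnd restarts: from 1 after a timeout, or from the new ssthresh after duplicate ACKs.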

A TCP connection can be compared to a caravan of vehicles crossing a multilane road. To be safe and leave room for other vehicles, the caravan initially uses only one lane.

If the road is not congested, the caravan gradually takes more lanes, and beyond a certain point it takes new lanes more slowly. These are the slow start and congestion avoidance phases.

If taking too many lanes causes congestion, the caravan must cut back: TCP, for example, halves the number of lanes, and how quickly it adds lanes again depends on the case. This is the congestion detection phase.

Error control mechanism

TCP enforces error control based on the following:

- Checksum: Every TCP segment carries a checksum. If the checksum computed by the receiver does not match, the segment is treated as corrupted.

- Acknowledgment: Each time the client delivers a segment, the server replies with an ACK to confirm that the segment has arrived, similar to the ACK in the three-way handshake.

- Retransmission: A segment is resent in two situations:

  - Timeout: If the client does not receive an ACK from the server within a certain time (derived from the RTT), it resends that segment.

  - Three duplicate ACKs: If the client receives three duplicate ACKs for data it has already sent, it assumes the next segment was lost and resends it without waiting for the timeout.
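Both retransmission triggers can be sketched in Python. The timeout estimate follows the RTO formulas of RFC 6298 (smoothed RTT plus four times the RTT variation, with a 1-second minimum); as a simplification it ignores the clock-granularity term and any maximum RTO. The duplicate-ACK counter illustrates the "three duplicate ACKs" rule. Both class names are hypothetical, and real TCP stacks differ in many details.

```python
from typing import Optional


class RetransmissionTimer:
    """Retransmission timeout (RTO) estimate following RFC 6298, in seconds."""

    ALPHA = 1 / 8   # weight of a new sample in the smoothed RTT (SRTT)
    BETA = 1 / 4    # weight of a new sample in the RTT variation (RTTVAR)
    K = 4           # RTO = SRTT + K * RTTVAR
    MIN_RTO = 1.0   # RTOs below 1 second are rounded up to 1 second

    def __init__(self) -> None:
        self.srtt: Optional[float] = None
        self.rttvar = 0.0
        self.rto = 1.0  # initial RTO before any RTT has been measured

    def on_rtt_sample(self, r: float) -> float:
        """Update the estimate with a measured RTT `r` and return the new RTO."""
        if self.srtt is None:   # first measurement
            self.srtt = r
            self.rttvar = r / 2
        else:                   # RTTVAR is updated using the old SRTT
            self.rttvar = (1 - self.BETA) * self.rttvar + self.BETA * abs(self.srtt - r)
            self.srtt = (1 - self.ALPHA) * self.srtt + self.ALPHA * r
        self.rto = max(self.MIN_RTO, self.srtt + self.K * self.rttvar)
        return self.rto


class DuplicateAckDetector:
    """Signals a fast retransmit after three duplicate ACKs."""

    THRESHOLD = 3

    def __init__(self) -> None:
        self.last_ack: Optional[int] = None
        self.duplicates = 0

    def on_ack(self, ack: int) -> bool:
        """Return True when the segment starting at `ack` should be resent."""
        if ack == self.last_ack:
            self.duplicates += 1
            return self.duplicates == self.THRESHOLD
        self.last_ack = ack  # new data acknowledged: reset the counter
        self.duplicates = 0
        return False


timer = RetransmissionTimer()
print(timer.on_rtt_sample(2.0))  # 6.0
print(timer.on_rtt_sample(2.0))  # 5.0

detector = DuplicateAckDetector()
print([detector.on_ack(a) for a in [100, 200, 200, 200, 200]])
# [False, False, False, False, True]
```

In the last example, the first ACK for 200 is a normal acknowledgment; only the three repeats that follow count as duplicates.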

Pros and Cons

By now, you should be able to identify the main pros and cons of TCP, right?

Pros:

- Reliable transmission: TCP guarantees that all packets are received by the receiver and retransmits any lost packets. This makes TCP a reliable protocol for applications that require the delivery of all packets, such as email, file transfer, and web browsing.

- Error checking: TCP provides a checksum mechanism to detect any errors that occur during transmission, ensuring that the data received is the same as the data sent.

- Flow control: TCP uses a sliding window mechanism to control the flow of data between the sender and receiver, preventing the receiver from being overwhelmed with too much data at once.
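The sliding-window rule comes down to one calculation: how many new bytes the sender may put on the wire right now. This Python sketch assumes the standard rule that unacknowledged data must stay within the smaller of the congestion window (cwnd) and the receiver's advertised window (rwnd); the function and parameter names are hypothetical.

```python
def sendable_bytes(cwnd: int, rwnd: int, last_byte_sent: int,
                   last_byte_acked: int) -> int:
    """Number of new bytes the sender may transmit at this moment.

    Unacknowledged ("in flight") data must fit within min(cwnd, rwnd),
    so a small receiver window throttles the sender even if the network
    could carry more.
    """
    in_flight = last_byte_sent - last_byte_acked
    window = min(cwnd, rwnd)
    return max(0, window - in_flight)


# The receiver advertises only 16,000 bytes, and 8,000 are still unacknowledged.
print(sendable_bytes(cwnd=64_000, rwnd=16_000,
                     last_byte_sent=12_000, last_byte_acked=4_000))  # 8000
```

As ACKs arrive, `last_byte_acked` advances and the window "slides" forward, allowing more data to be sent.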

Cons:

- Requires more bandwidth: TCP headers are larger (at least 20 bytes, versus 8 bytes for UDP), and acknowledgments add extra traffic.

- Slower: this is the price paid for the advantages mentioned above.

  - With TCP, the handshake alone costs a full round trip before any data can be sent, and sending and receiving the data takes several more round trips, which adds up.

  - We also have to account for the time the server needs to process the request before responding, so some delay is unavoidable. As a small test, I used the ping command to measure the latency to freecodecamp.org: it was about 44 ms.

So one round trip takes roughly 45 ms. With a full RTT spent on the three-way handshake and many more on sending and receiving data, the response time for a single request grows quickly. I then used curl to measure the response time, which averaged at least 0.9 s (900 ms, or about 20 RTTs), far longer than a single round trip.

Overall, both TCP and UDP have their advantages and disadvantages, and the choice between them depends on the requirements of the application.

My command:

curl -X GET -w "total time: %{time_total}\n" https://freecodecamp.org | grep "total time:"

Or you can use Chrome's inspector (DevTools):

In practice, big players in the tech industry use various techniques to reduce TCP's round-trip overhead, but they are beyond the scope of this article.

Browsers also use some techniques to reduce response time. And while measuring total response time is not an entirely fair test of TCP and HTTPS, it does show why TCP can be slow!
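To measure the handshake cost itself rather than the whole HTTPS request, a small Python sketch can time a TCP connect. A blocking connect returns once the three-way handshake has completed, so against a remote host the result is roughly one RTT (plus a DNS lookup if you pass a hostname). The helper name is hypothetical, and the demo uses a local listener so it runs without network access; the localhost figure will be far smaller than the ~45 ms measured above.

```python
import socket
import time


def tcp_connect_time_ms(host: str, port: int, timeout: float = 5.0) -> float:
    """Time how long a TCP connect (i.e. the handshake) takes, in milliseconds."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start  # handshake done once connect returns
    return elapsed * 1000


# Local demo: a listening socket lets the kernel complete the handshake
# even before accept() is called, so no separate server thread is needed.
with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
    server.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    server.listen()
    port = server.getsockname()[1]
    print(f"handshake to localhost took {tcp_connect_time_ms('127.0.0.1', port):.3f} ms")
```

Against a real server you could call, for example, `tcp_connect_time_ms("freecodecamp.org", 443)`; that requires network access, and the result will vary with your location.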

UDP – User Datagram Protocol

To understand how UDP (User Datagram Protocol) works, we need to understand IP, short for Internet Protocol. IP is located in the network layer, below both TCP and UDP, which are the main subjects of this article.

The main task of the IP layer is to deliver datagrams from one computer to another using their addresses (IP addresses). You can think of sending data via IP as sending a package through the postal service: IP adds information such as the sender and receiver addresses and other parameters, while the actual content of the message, the payload, is carried inside the package.

IP does not notify the sender when a message is successfully delivered, nor does it provide any feedback when errors occur. In other words, IP offers no reliability guarantees to the layers above it.

Therefore, error checking and retransmission of lost data are handled by higher layers of the network model. TCP, with its three-way handshake, acknowledgments, and retransmissions, is one protocol that provides these guarantees, which is why TCP is considered reliable.

Returning to UDP: UDP wraps data in its own small header with four fields: source port, destination port, length, and checksum. Of these four fields, the source port and the checksum are optional (the checksum only over IPv4; IPv6 requires it). IPv4 does have a header checksum of its own, but it covers only the IP header, not the payload, and IPv6 has no header checksum at all.
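The UDP header is small enough to build by hand. This Python sketch packs the four 16-bit fields and computes the one's-complement Internet checksum described in RFC 1071. As a simplification it skips the IP pseudo-header (addresses, protocol, length) that the real UDP checksum also covers, so its checksum values will not match what a real stack sends. The function names are hypothetical.

```python
import struct


def internet_checksum(data: bytes) -> int:
    """16-bit one's-complement checksum (RFC 1071), as used by IP, TCP and UDP."""
    if len(data) % 2:
        data += b"\x00"  # pad to a whole number of 16-bit words
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)  # fold the carry back in
    return ~total & 0xFFFF


def build_udp_header(src_port: int, dst_port: int, payload: bytes) -> bytes:
    """Pack source port, destination port, length and checksum (8 bytes total).

    Simplification: the pseudo-header is left out of the checksum.
    """
    length = 8 + len(payload)  # header + payload, in bytes
    header = struct.pack("!HHHH", src_port, dst_port, length, 0)
    checksum = internet_checksum(header + payload)
    # A computed checksum of 0 is sent as 0xFFFF, because 0 means "no checksum".
    return struct.pack("!HHHH", src_port, dst_port, length, checksum or 0xFFFF)


header = build_udp_header(12345, 53, b"hello")
print(len(header), header.hex())
```

A receiver can verify the data by running `internet_checksum` over the header and payload together: an intact datagram sums to 0.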

Benefits and drawbacks of UDP

Benefits:

- Fast and lightweight: Since it needs no handshake, acknowledgments, or retransmissions like TCP, UDP is very fast and lightweight.

- Supports broadcasting: A single machine can send data to many other machines on the network.

- Requires fewer resources than TCP.

Drawbacks:

- Unreliable: There is no guarantee that data will reach its destination.

- No error recovery (only the checksum for error detection), retransmission, congestion control, etc.
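The absence of a handshake is visible in code. In this Python sketch (loopback only, so the datagram will in practice arrive), the sender simply calls `sendto()` with no prior connection. Nothing tells the sender whether the datagram arrived; if it were lost, the receiver's `recvfrom()` would just time out and the application would have to decide what to do. The message content is made up for illustration.

```python
import socket

# Receiver: bind a UDP socket. There is no listen()/accept() and no handshake.
receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
receiver.bind(("127.0.0.1", 0))  # port 0: let the OS choose a free port
receiver.settimeout(2.0)         # don't block forever if a datagram is lost
addr = receiver.getsockname()

# Sender: each sendto() emits one self-contained datagram immediately.
sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
sender.sendto(b"player moved to (10, 20)", addr)

data, from_addr = receiver.recvfrom(2048)  # returns one whole datagram
print(data.decode())  # player moved to (10, 20)

sender.close()
receiver.close()
```

Compare this with the TCP sketch: there is no connect step to time, which is exactly why UDP avoids the handshake round trip.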

How are UDP and TCP used?

Usages of TCP

TCP is used for tasks that do not need maximum speed but do require reliable data transmission, such as:

- File transfer protocols: FTP

- Web browsing: HTTP

- Email protocols: IMAP

- Remote desktop: RDP

- …

Usages of UDP

With the advantages of being fast, lightweight, and supporting broadcast, UDP can be used in fields such as:

- Media streaming: For example, streaming video, where a few lost frames can be acceptable in exchange for faster loading speed.

- Gaming: For real-time online games that send frequent updates, such as character movements and actions, UDP is a better fit than TCP.

- DNS Lookup

- Video conferencing

- Online voice chat

- …

Summary

With this article, I hope you now have a better understanding of the two classic protocols, TCP and UDP, as well as the strengths and weaknesses of each.

The information in this article draws on various sources, especially the book "High Performance Browser Networking", which is available for free online.

References: